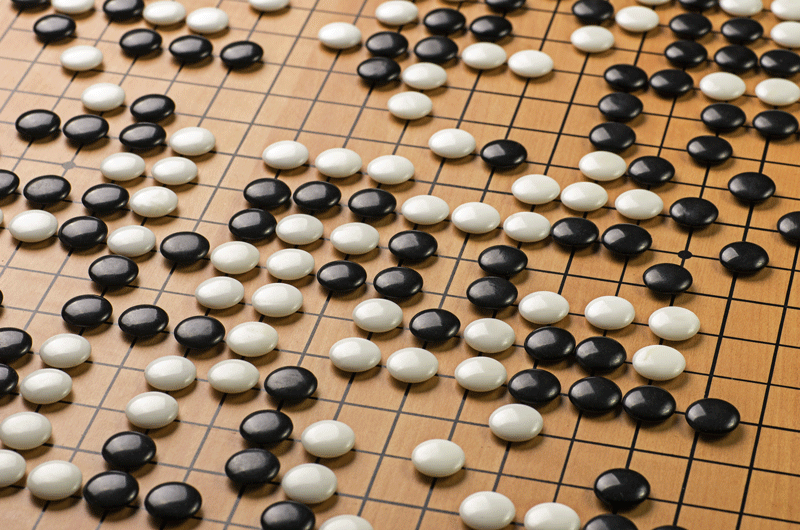

The ancient Chinese game of Go has been a frontier for programmers of artificial intelligence – the 2,500-year-old board game offers so many possible scenarios that it’s been a challenge for computers to master.

The game itself is seemingly simple: players take turns placing small black or white stones on a 19-by-19 grid and try to gain territory on the board by surrounding groups of the other’s stones. Go has fewer rules than chess, but the size of the board and the sheer number of possible board positions – more than the number of atoms in the observable universe – make it considerably more abstract. Even good players can’t quantify their exact approach to the game, instead crediting their success to intuition, a feel for the board and an uncanny awareness of the flow of the game.

While machines have had success against humans in chess, checkers, Scrabble and even the gameshow “Jeopardy!,” Go has been harder for artificial intelligence to crack. That is, until Google’s DeepMind AI lab in London developed the AlphaGo project. This new machine has an unprecedented amount of computing power and has been programmed with the ability to learn through reinforcement. It grasped the game of Go by playing against itself and growing its skill set with each successful move. Within two years of development, AlphaGo took on and defeated the European champion, Fan Hui, in a five-game match, winning every game.

This week, AlphaGo is taking on a much tougher opponent, Lee Sedol, the fifth-ranked Go player in the world, in a five-game match worth a million US dollars, taking place in Seoul, South Korea. The first game took place earlier this week and, after a competitive three-and-a-half-hour bout, Sedol resigned, giving AlphaGo the victory. The result was surprising to everyone involved and is proving to be a historic event, making front-page news across Asia, including Japan, where Go is very popular.

If you want to get in on the action, the remainder of the games can be streamed live or replayed on DeepMind’s YouTube channel.

–Luca Eandi